A series of fake videos, AI‑cloned audio clips, and dozens of forged letters on RSS letterhead have flooded social media, each designed to provoke political outrage.

It felt explosive because the allegations were huge. But soon after, a couple of fact‑checkers dug deeper and announced that the whole thing was a deepfake. The audio had been cloned, the visuals stitched together, and the context deliberately twisted. The government stepped in, issued a clarification and officially debunked the fake narrative. I felt a mix of relief, because the lie was exposed, and worry, because it showed how easily such misinformation can spread.

What shocked me even more was learning that this wasn’t a one‑off stunt. A senior RSS functionary told me (and I later saw it quoted in a news piece) that this video was just the tip of a massive disinformation iceberg. In the months that followed, a whole series of fake videos, AI‑cloned audio clips, and dozens of forged letters on the RSS letterhead started popping up. Each of them seemed crafted to stir political outrage, twist public perception and, frankly, create chaos.

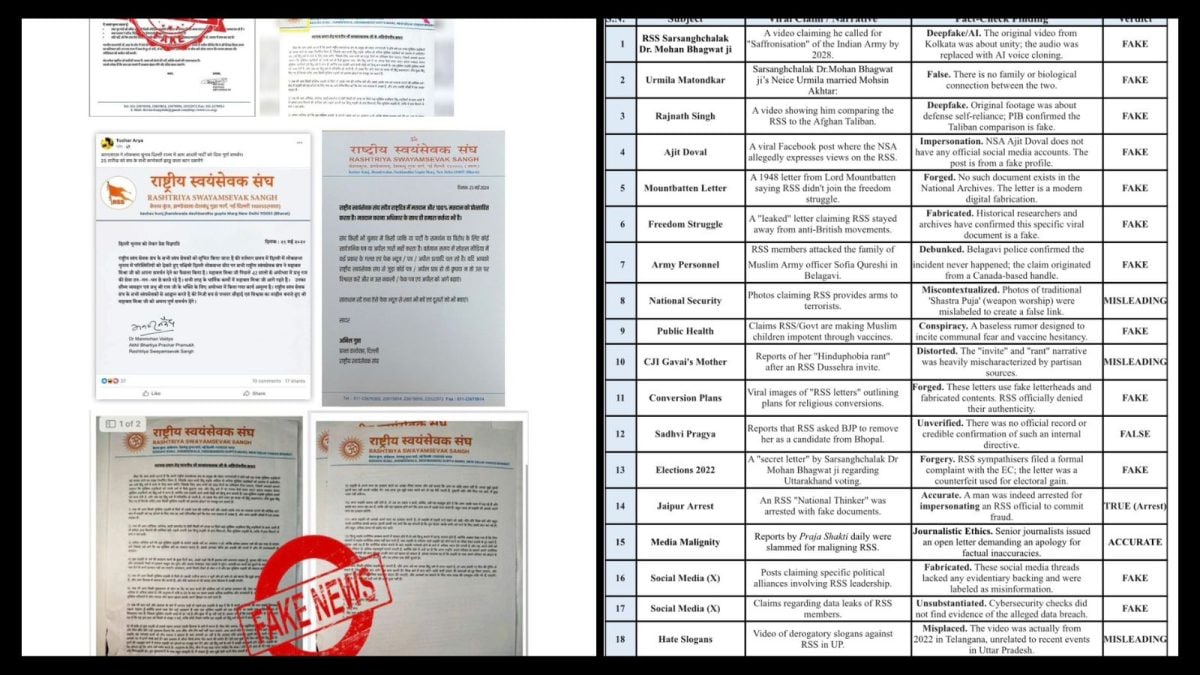

The pattern was clear. From fabricated letters addressed to PM Modi to fake statements involving Rahul Gandhi, the content used official‑looking formats and viral‑ready headlines, and was timed around sensitive moments such as state elections or a heated parliamentary debate. Even leaders like Rajnath Singh were dragged into these deepfakes, while impostor accounts imitated figures such as Ajit Doval to lend false credibility.

The Letterhead Conspiracy

The objective, as the RSS source explained, had moved beyond simple misinformation to large‑scale narrative disruption. The organisation is now actively flagging the fake letters as AI‑generated, filing legal complaints and warning that its name is being systematically misused.

“In the current hyper‑accelerated information cycle, misinformation is no longer random noise. It is engineered. Carefully timed, politically loaded, and digitally sophisticated, these campaigns aim not just to mislead but to destabilise narratives before facts can catch up. And for context, one can notice how such deepfakes or AI‑made documents increase before the assembly or Lok Sabha elections. So clearly, these are not random things,” said the senior functionary.

Later, a series of fabricated ‘policy’ notes emerged – one on religious reservation, another outlining a conversion strategy, and yet another hinting at internal dissent within the Sangh. Add to that fake advisories on elections, fabricated surveys, minority‑outreach plans and even a bogus national‑security brief, and a clear pattern emerges. Every document shared the same footprint: an official‑looking letterhead, bureaucratic language, and just enough plausibility to go viral.

The Fake Letter Factory

Beyond videos, the more insidious tool has been the forged letters on RSS letterhead. Below are eight such fabricated communications that have been seen in recent months, each designed to trigger a political shockwave:

- A fake letter to PM Narendra Modi, allegedly from Bhagwat, questioning Assam politics and indirectly targeting Himanta Biswa Sarma.

- A fabricated note praising Rahul Gandhi as a future leader.

- A forged directive on “religious reservation”, falsely attributed to the Sangh.

- A conversion‑strategy document, outlining non‑existent plans for religious mobilisation.

- A letter on election interference, allegedly guiding voting patterns.

- A communication on internal dissent, projecting cracks within the organisation.

- A policy note on minority outreach, crafted to appear controversial.

- A ‘strategic advisory’ on national security, misusing institutional tone and format.

Seeing these letters pop up in my newsfeed made me wonder how many people were being fooled. Some looked so authentic that even seasoned journalists paused before sharing.

RSS Pushback

According to sources, both Delhi Police and Assam Police have investigated these incidents. A few individuals, some reportedly linked to the Congress student wing (NSUI), were arrested for creating and circulating the fake letters. A senior RSS functionary said sympathisers of the organisation have lodged complaints with the cyber cell of the Crime Branch and with the Election Commission, warning that such fake content aims to disturb public harmony.

The response has moved beyond statements. Multiple FIRs have been registered, agencies are tracking the origin of doctored videos and forged documents, and arrests have been made in select cases by Delhi and Assam police, signalling that the crackdown is underway. Investigators are increasingly pointing to organised networks that leverage AI tools to mass‑produce and circulate such content at speed.

Alongside videos and letters, sources within the organisation have also flagged a rise in fabricated news articles and opinion pieces, published on obscure portals or circulated as screenshots, that falsely attribute positions, internal rifts, or policy stances to the RSS. Its functionaries maintain this is not isolated misinformation but a structured campaign to distort public discourse. As elections draw closer, the battle is clearly expanding from the ground to the digital sphere, where credibility itself is under attack.

“The most effective long‑term response will combine technological safeguards such as mandatory metadata embedding, watermarking, and AI‑generated content labeling by the AI agents or applications with behavioural interventions. This may encourage users to ponder over, especially when content is emotionally charged or urgent, and remains a critical line of defence. Deepfakes thrive in low‑trust environments,” added Sandhu.

Narrative Warfare

Deepfake speeches have also targeted leaders like Rajnath Singh. Fake social‑media profiles impersonate figures such as Ajit Doval. Old videos are repurposed, and incidents from one state are passed off as having happened in another to inflame tensions. The timing is rarely accidental. These waves peak around elections and politically sensitive moments, when public opinion is most vulnerable.

“What is needed now is a comprehensive structural program along with a centralised governing body with clear jurisdiction, standardised complaint mechanisms, and the authority to mandate platform compliance. This will encourage people to pause before sharing. The framework should include requirements for traceability, rapid takedown processes, and accountability across the entire content lifecycle,” said Abhijit Tripathy, another senior industry expert involved in cyber security and forensic analysis.

“From a cybersecurity lens, users act as the first line of defence, but only at a basic level. They can look for anomalies like mismatched audio‑video sync, unnatural facial movements, or inconsistencies in documents. Verifying content through trusted sources and official channels is critical. Simple tools like reverse image search or checking metadata can help flag suspicious content. However, highly sophisticated deepfakes may bypass human detection, which is why awareness is key,” added Tripathy.

Whenever I hear about such fake clips, I try to do a quick check – pause the video, watch the lip movements, maybe run a reverse‑image search on a frame. It’s not foolproof, but it’s something we can all do while scrolling through our feeds at the tea stall.