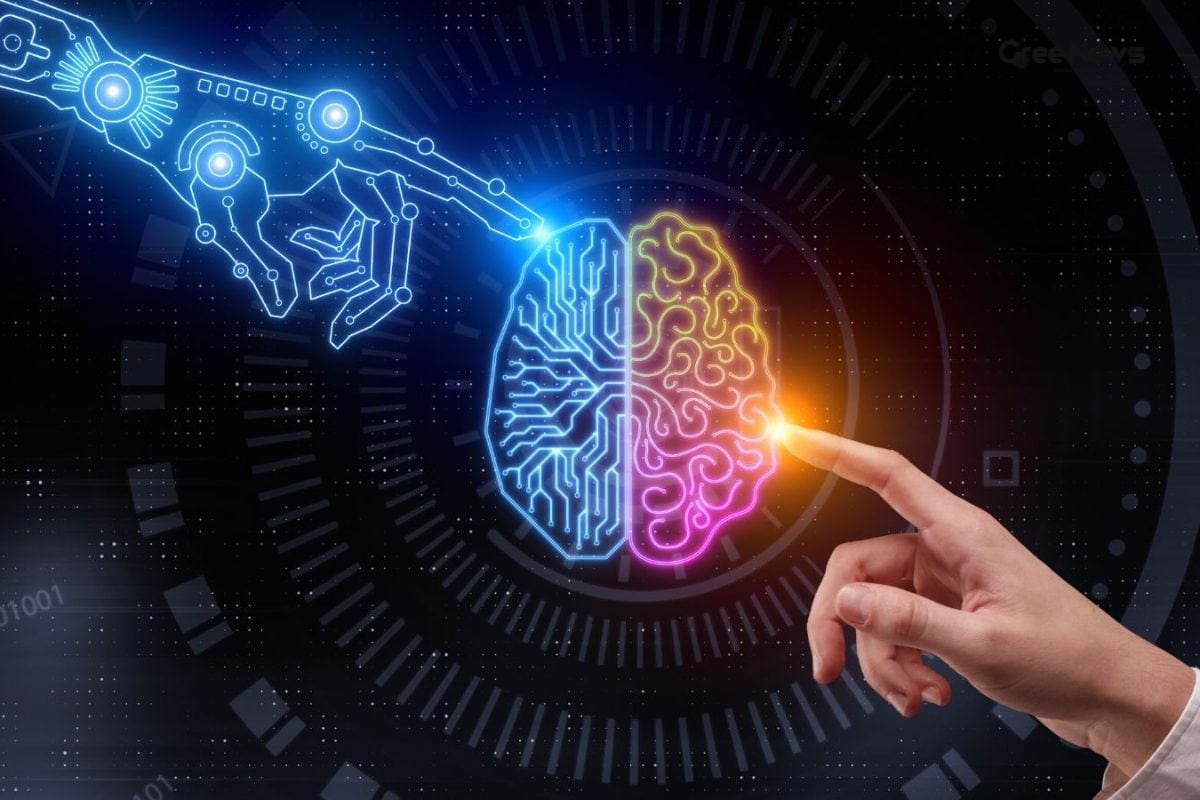

When I was sitting in a risk‑review meeting at a big financial house, something happened that still haunts me. A junior analyst had just fed a counter‑party exposure problem to an AI system. The output looked sleek: well‑structured, hedged just right, and written in the exact tone we use when talking about credit risk. He walked us through it with a confidence that screamed "I got it right".

Two things went down instantly. First, the senior risk officers in the room couldn’t pinpoint what was wrong with the analysis. Second, they all felt, without naming it, that something was off. The silence that followed was the kind you can almost feel, thick and a little uncomfortable. Then a senior officer finally pulled on a thread, and in about ninety seconds the whole thing fell apart. I’ve been replaying those ninety seconds ever since.

That moment made me realise that the real story behind the current breaking news around AI isn’t about robots stealing jobs. It’s about how the perception of expertise shifts in real time, often faster than any formal policy can catch up.

The public debate is asking the wrong question

Most of the coverage you see, whether in the latest news from India or in viral news pieces, asks the dramatic question: "Will AI replace doctors, lawyers, engineers?" Those headlines catch attention, but they oversimplify a far messier reality. The real transformation is already happening in rooms like that risk‑review chamber, and it works not by making expertise obsolete but by thinning the social distance that used to make expertise visible.

Think about it: a senior risk officer doesn’t just have more data than a junior analyst. Over years she has internalised patterns from actual failures. She can sense a suspicious number before she can even say why. That intuition lives below the level of conscious explanation; it’s a kind of muscle memory for the brain, forged by real stakes and real consequences.

Large language models, on the other hand, learn the surface: the jargon, the hedges, the polished structure of a professional judgment. Because they are tuned against human evaluators, they get better at sounding plausible. Plausibility, however, isn’t the same as correctness. When you’re sitting in a meeting without deep domain knowledge, a confident‑sounding answer can look just as right as a seasoned professional’s gut feeling. That’s the crux of many trending news stories in India about AI missteps.

What expertise actually looks like

Expertise isn’t a checklist of facts. It’s a web of heuristics built over decades. A senior risk officer knows which pattern of numbers signals a hidden risk, even if she can’t immediately articulate the rule. Those heuristics are not stored in any database; they’re lived experience.

When an AI system produces a well‑structured analysis, it’s mimicking the language of that expertise, not the deep‑seated intuition behind it. The system optimises for what makes people nod in agreement, not for what makes the decision truly sound. That subtle difference is why many professionals feel a creeping loss of legibility: the audience can no longer tell the difference between a real expert and a synthetic replica.

In the financial world, that loss of legibility can be dangerous. Imagine a regulator reviewing a report that looks perfect on paper but hides a structural flaw that only a seasoned analyst would have sensed. The regulator may never know why the flaw was missed because the AI has made the report look so polished.

The clock‑tower analogy

Before mechanical clocks, time was a local negotiation. The guild master’s word on when the workday started carried authority because he controlled the measurement. When clocks appeared in European towns during the 14th and 15th centuries, they didn’t just make time more accurate; they redistributed who could know what time it was.

The power shift was abrupt. Workers no longer relied on a master’s word; everyone could look at the same tower and know the hour. That didn’t happen smoothly: there were riots, labour disputes and a whole century of social re‑organisation. The technology itself was neutral; the disruption came from the social structures that had to adjust.

That story is a perfect mirror for today’s AI boom. The technology gives us a new way to ‘measure’ expertise: a language model that can write a perfect‑sounding risk assessment. The real question is not "Will the clock replace the master?" but "How will society re‑organise when everyone can produce a clock‑like analysis?" The answer, as history shows, is messy and takes generations.

What senior practitioners are really worried about

During my research across financial services and tech‑governance bodies, I kept hearing the same anxiety: it’s not about automation per se. It’s about losing the audience’s ability to see the gap between an expert and a non‑expert. Boards, regulators and clients start believing that a coherent, confident AI‑generated report is as trustworthy as a seasoned professional’s judgment, simply because it looks the part.

Many senior officers told me they were not afraid of being replaced. Their deeper fear was that their expertise would become invisible, that the ‘license to interpret’ would no longer be recognisable. When the gap shrinks, the whole professional licensing and credentialing system starts to look like a ritual rather than a real safeguard.

This is where the viral news angle sneaks in. Headlines may shout about AI‑driven mistakes, but the quieter, more insidious drift is that millions of small decisions start to hinge on plausible‑but‑wrong outputs, subtly reshaping behaviour long before anyone realises the pattern.

The failure mode we should watch

The scary scenario isn’t a single catastrophic error that makes headlines. It’s a slow creep of plausible analyses that are technically wrong in ways that are hard to detect. Those errors blend into everyday decisions: think of a loan officer approving a borderline case because the AI says the risk looks “acceptable”. Over thousands of such cases, the system starts nudging the whole market in a direction nobody intended.

Because the output looks legitimate, it rarely triggers alarm. The real danger is the erosion of deference: people stop asking “why?” because the answer feels authoritative enough. That erosion happens long before anyone has compiled the data that would expose the problem.

Building the next generation of judgment

What survives in this new landscape isn’t a credential; it’s the ability to interrogate a confident answer. It’s about spotting the hidden assumptions that an AI quietly carries past you, and asking the uncomfortable question that pushes the system to its edge.

These skills can be taught, but they’re not what we usually select for in hiring, nor are they what AI tools are built to develop in their users. The senior officer who unraveled the AI‑driven analysis did it because she spent twenty years learning to distrust fluency. She’d once been wrong herself, and that memory, that personal accountability for outcomes, became her safeguard.

Now the big question that keeps many of us up at night isn’t "Will AI get smarter?" but "Will we nurture professionals who can still tell when not to trust a smooth answer?" If we rush to optimise pipelines for speed and fluency, we might end up with a generation that’s excellent at working with AI outputs but poor at recognising their limits.

This is fundamentally a judgment problem, not a pure technology problem. Judgment, so far, is the one thing that doesn’t transfer easily into code.

Conclusion: Perception over automation

Looking back at that ninety‑second unraveling, I realise the story isn’t about AI taking over jobs. It’s about how AI reshapes the very perception of expertise, making the once‑clear line between expert and layperson blur.

As India follows the latest news on AI, the conversation needs to shift from the dramatic "Will AI replace us?" to the subtler "How do we keep expertise visible and trustworthy when machines can sound just as convincing?" The answer lies in cultivating judgment, encouraging a culture that questions fluency, and building institutions that can adapt faster than societies did in past upheavals such as the arrival of the clock tower.

Until then, every meeting where a polished AI report is presented is an opportunity, a chance to test the limits of plausibility and to remind ourselves why deep, experienced judgment still matters.
